Or maybe the second word in the title should be “Shouldn’t”!

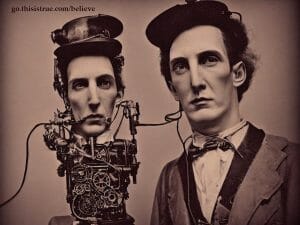

Cool photo, but it’s not real:

I told an AI “art generation program” called Stable Diffusion to make me a picture of a “purple house teetering on top of cliff.” (Top, bottom — what’s the difference?) That’s what I got. Actually, it was one of four that it generated in around 20 seconds.

While it doesn’t look quite right, it’s still somewhat plausible, especially if you don’t look carefully. (Click to see it larger.)

Here’s another example from the four generated at the same time:

It won’t be long until the photos are better, and anyone will be able to quickly and easily create any image desired.

In other words, you will no longer be able to believe your own eyes. 🙁

We’re already starting to see “deepfake” videos, too, with recognizable faces and the person’s voice, with lip movement to match what’s being said by whoever is manipulating the action. They can make anyone appear to be saying anything they want: you will no longer be able to believe your own ears, either.

Already, politicians have the ability not just to lie to your face, but to show you fake things you can study closely so you can “make your own [completely wrong] conclusions.”

Not that any politician would actually lie to get what s/he wants though, right? Riiiiiiiight.

“Art”

Added Section: If these things are “art generators,” where is the art? The above images sure don’t qualify.

When AI is used to create artistic works guided by a human who writes a properly descriptive “prompt,” is the unique result creative? Absolutely. Is it (to use a legal term) copyrightable? Well, there’s a good question that has not definitively been answered by those who definitively answer such questions. No, not the U.S. Copyright Office (at the Library of Congress), but rather the courts.

The original arresting “photo” here came from my imagination …as described to a computer program (aka AI). I bought access from a company that provides the same sort of tool described above, so I have money in it. My prompt was “Steampunk Nicola Tesla portrait head and shoulders retro super-realistic, by HP Lovecraft, Cyberpunk, photo” (where “HP Lovecraft” is a style of art, even though he was a writer, not a graphic artist).

The first result was OK, but too freaky, if you can believe that. I added the “retro” and tried again, and got the amazing result you see here.

Copyright “is intended to protect the original expression of an idea in the form of a creative work, but not the idea itself” (Wikipedia, emphasis added). That definition doesn’t say anything about human creativity since that has been assumed from the start. Things have changed. (But hey, isn’t “my” artistic “photo” cool, and somehow plausible?!)

The work is also clearly very artistic and creative. Do I thus own the copyright? Unclear! But I claim so until I hear otherwise. The company I subscribed to explicitly avoids that question, saying that sure, I own the “commercial rights” to make what I want from it, from using it to illustrate this blog post to making T-shirts or whatever — and I’m reserving the much higher resolution version so that can be done well.

And, they say, it’s extremely unlikely that another customer would get the same result, even if s/he used the exact same prompt that I used. There is a random “seeding” process as part of the algorithm, and the AI model is (or could be) further trained as more images are loaded in and described (by subjective humans!) as to what each picture depicts, and those images are then mixed with other concepts in its output.
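To make the “seeding” point concrete, here is a toy Python sketch. It is not Stable Diffusion's code or anything the company provided: `toy_generate` is a hypothetical stand-in that just produces a few pseudo-random numbers. It only illustrates the general idea that when the starting point depends on a random seed, the same prompt gives a different result whenever the seed changes, and an identical result only when the seed is reused.

```python
import random


def toy_generate(prompt: str, seed: int, size: int = 8) -> list[int]:
    """Hypothetical stand-in for an image generator (NOT a real model).

    Returns a short list of pseudo-random "pixel" values that depends on
    both the prompt text and the seed.
    """
    # Seed a private random generator from the prompt and seed together.
    rng = random.Random(f"{prompt}|{seed}")
    return [rng.randrange(256) for _ in range(size)]


prompt = "purple house teetering on top of cliff"
first = toy_generate(prompt, seed=1)
second = toy_generate(prompt, seed=2)
repeat = toy_generate(prompt, seed=1)

print("same prompt, same seed, same result:", first == repeat)
print("same prompt, new seed, same result:", first == second)
```

A real generator picks the seed at random unless told otherwise, which is why two customers typing the same words would almost never land on the same picture.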

So is the creativity mine or the machine’s? And if some combination, is enough of it mine to be protected by copyright? And if AI is trained on copyrighted images, are the results the AI spits out “derivative works” thus protected by the original copyright, rather than being new works on their own? Yikes, it gets complicated fast! You can be sure this whole concept is already wending its way to testing in the courts in the U.S. and elsewhere …says the guy who wrote the True Stella Awards!

Good Uses?

AI image manipulation can also be helpful, of course, and coincidentally I also recently bought some AI photo processing software. For instance, here’s a photo of me very early on in my NASA career in my first office at JPL, with my immediate project supervisor, Laura:

Before shrinking the original, I ran it through Topaz Photo AI, set for Recover Faces, Sharpen, and Remove Noise, then reduced the result to 1200px wide and saved it again:

“Recover Faces”? Yep: special algorithms trained on what faces look like so it can fill in missing details in a plausible way.

With both of these examples reduced to 1200px, they both actually look pretty good, though you can probably tell that the second one is clearer. Again, you can click them to see them larger, but how about the full resolution?

Well, that would be an awfully big image, so I just cut out a small part of it, and stuck the before and after into the same image:

Now you can clearly see the difference even before clicking to make it larger. A startling difference, but it’s not perfect: the upper-most hair isn’t clear, especially compared to other parts, and there is inconsistent recovery of the partition wall: it’s smooth on the right side, grainy on the left. (It’s fabric, so it’s really somewhere in between.) There is still noise in my neck area, and on the file cabinet drawer.

Still, it looks pretty good and realistic, right? You may wonder: will it work with Black faces too? Read on.

For snapshot use, it’s lovely to have a much clearer photo of myself and my now-long-time-friend Laura. But you can imagine that this technology could easily be used for nefarious purposes, eh?

Legit Editorial Use

Can this legitimately and responsibly be used for news editorial purposes?

Here’s one way it might be. Control-click (to open it in a new tab) on my old blog post, The Few, The Proud, the Falsified News Story, for an example.

The point of that post is to prove that the purported widely-circulated story was a lie, that “Tyrone Jackson” (a made-up name to make it clear he’s Black; real name Tracey Attaway) was beaten by U.S. Marines after shoplifting at a Georgia store at Christmastime.

He was hospitalized, the fake story claims, “with two broken arms, a broken ankle, a broken leg, several missing teeth, possible broken ribs, multiple contusions, assorted lacerations, a broken nose and a broken jaw…injuries he sustained when he slipped and fell off of the curb after stabbing the Marine, according to a police report.”

But his booking photo (aka mug shot) shows that he’s perfectly healthy, and unbeaten, immediately after his arrest. The list of injuries is a complete fabrication.

The editorial problem? The best copy of his mug shot that I could obtain to help make that point is just 213x320px. While even with it that small it’s obvious he’s not been beaten, it would be a lot easier to see if it was larger — so I ran it through the Topaz software to make it much larger without making it look like a pixelized mess: 620x827px, or about 3x larger.
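For the curious, the “about 3x” figure can be checked with a few lines of Python. This is only arithmetic on the two pixel sizes quoted above; the variable names are just illustrative.

```python
# Pixel dimensions quoted in the post: original mug shot and enlarged version.
orig_w, orig_h = 213, 320
new_w, new_h = 620, 827

width_scale = new_w / orig_w    # how many times wider
height_scale = new_h / orig_h   # how many times taller
pixel_scale = (new_w * new_h) / (orig_w * orig_h)  # how many times more pixels

print(f"width: {width_scale:.2f}x, height: {height_scale:.2f}x, pixels: {pixel_scale:.1f}x")
# prints: width: 2.91x, height: 2.58x, pixels: 7.5x
```

So the enlargement is a bit under 3x in each direction, which works out to roughly seven and a half times as many pixels for the software to fill in.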

The result has an odd shadow on his left cheek (or is that a birthmark? In any case, it was clearly there on the small version too), but it makes it even clearer that he was absolutely not severely beaten.

But how is this “responsible” use of image processing?

Because the manipulation is not only called out clearly in the caption, but the original image is also included so readers can inspect it for themselves. But then we can legitimately wonder: how many publications will actually be that transparent and accountable? Responsible ones will, but can we count on all of them? Well, no. Sadly, we cannot.

It Will Get Better (aka Worse)

There are often clues in manipulated photos that they are manipulated: things that just don’t look quite right. But even freely available (and even free) tools like Stable Diffusion (the free one I used for the purple house images is here) will improve over time.

It won’t be long until we can’t tell, and there are plenty of people who will take advantage of that. It is thus even more important to think about claims and get evidence from multiple sources before we make up our minds on a purported event.

Some of these AI generators can already take a photo as input, too, and then manipulate it with just a plain-language command, such as “replace the person next to President Biden with Hillary Clinton” (or the terrorist of the week).

And it can do it in seconds; a few minutes for a video clip, with voices changed and saying anything the manipulator wants them to.

Truly, you shouldn’t believe your eyes. It’s coming sooner than you think: it’s already here for professionals, and is soon to be in the hands of everyone, maybe even for free.

I’ll be interested in your reactions in the comments.

– – –


This page is an example of my style of “Thought-Provoking Entertainment”. This is True is an email newsletter that uses “weird news” as a vehicle to explore the human condition in an entertaining way.

I have a large number of coworkers who travel frequently — and many have been in photographs with the POTUS when he visited one of our field offices (I have pictures of GW Bush, Clinton, Obama, but no Biden pics yet). I have also witnessed the artistry of our “photo guy in IT” as he deftly removed a tampon from the front pocket of a female coworker, removed the goofy floppy hat from a male coworker (and gave that fellow a lovely head of hair), and even added some blue sky and white clouds to a dreary grey-skied day. I think the original photos were much more of a “keeper” than the retouched images.

—

Especially the tampon. Nice (inadvertent) touch! 😉 -rc

I’ve always thought that the next great war would be an information war, fought not by killing people but by fooling them into supporting your worldview.

—

Yep. -rc

It’s like I’m watching civil society produce the tools that will bring it to an end.

—

I hate to be repetitive, but Yep. -rc

My first encounter with faked photography was Hitler’s supposed dance at the news Paris had fallen, one step looped by a Canadian (see link below), so I’ve been aware of this potential for too many decades. This is why I tend not to buy into any news or pictures until various perspectives on an event have weighed in ~ especially involving current news from any conflict staging now.

I noticed in the enhanced photo a very familiar appearing calendar posted on the left-side bulletin board; it is similar if not identical to the one my parents had.

—

One of the nice things about the employee gift shop was they always had the new NASA Calendar available. (Pretty sure they weren’t just given to us….) I’m actually surprised no one has mentioned the T-shirt under my sport coat: a little hard to see, but it shows the Shuttle in orbit around Earth, and “NASA: Don’t Leave Home Without Us.” -rc

I was more interested in reading the Bloom County cartoon, myself… Followed by the cover of the document Laura is holding.

—

All I can tell is Oliver Wendell Jones is looking at stars, saying something profound. It would be fun to see it again. The book is “Practical Applications of a Space Station”. -rc

Thank you. Both of you, since we now have a partial answer.

Found the Bloom County: https://www.gocomics.com/bloomcounty/1987/01/18

—

YES! That’s it. Thanks, Yvonne! -rc

This has been building for a while and I agree with Randy that it is only going to get worse. The 2018 movie, “CAM”, imagines one particularly creepy possibility for the all-too-near future.

Let’s talk about Laura’s necklace, can we? Is that the solar system?

—

No, just a pleasing pattern of beads. -rc

Seeing the steampunk Tesla I am re-thinking the copyright. You can claim to own it until the AI that created it decides to sue you for it as it KNOWS who the real artist was. After all, the AI did the “work”.

As you paid for the service of the AI in this case it should equate to buying a work of art from the artist themself. I think that should put you in the clear. Hmm, this is opening a can of worms.

I see a lot of lawyers drooling over the endless litigation this can bring. Well, that is until the AI is programmed to be a lawyer as well and we know what that will lead to. I don’t see the “human lawyers” going down without a fight. An AI could easily pass the bar exam. Oh good times ahead!

—

Actually, equating it to buying from the artist doesn’t work the way you might think: they still own the copyright. So “can of worms” is right! -rc

Actually, my analogy is telling an artist how to paint your portrait. De-emphasize the double chin, lose the acne, fill out the hairline, less gray hair, etc. No matter how thorough your description, unless it’s specified in the contract (like patents belonging to your employer) you don’t own the copyright on the finished painting — the artist who actually painted it does.

Time for humanity to develop direct perception?

I doubted it could be done on an average laptop, but a friend, using only one photo of his face, managed to put himself rather believably onto the body of Jude Law in a short scene from The Young Pope.

—

Maybe somewhat difficult today, but the software (and computers) get ever more powerful and fast. -rc

Wow!!! Cool article! That perm though….

They won’t even need actors in a few years. Kind of scary actually.

—

Laura is my friend seen in my old work photo above. -rc

We have the technological power of gods but have put it into the hands of morons and assholes.

—

Pretty much humanity in a nutshell: brilliance + obliviots + jerks…. -rc

The pictures of the houses by the cliffs look like model RR buildings posed by actual rocks. As a model RR guy I can tell that the buildings are not real but the rocky terrain is very realistic.

—

Yeah, the structures were rendered pretty poorly. But remember that by now, that particular generation model is considered pretty outmoded. I ran it again today in a much newer (paid) engine, and inserted it in the bottom section of the post. -rc

The first salvo has been fired since this article:

US judge: Art created solely by artificial intelligence cannot be copyrighted.

It’s not the final word, and it doesn’t definitively answer the relationship between prompts and results, but it’s a start.